Who is this for: Professionals without coding skills who need to scrape web data at scale. This kind of web scraping software is widely used by online sellers, marketers, researchers, and data analysts. If you have programming skills, a parsing library works best when you combine it with your own Python code, and Beautiful Soup is one of the most widely used Python parsers.

Web scraping (also termed web data extraction, screen scraping, or web harvesting) is a technique for extracting data from websites. It turns web data scattered across pages into structured data that can be stored on your local computer in a spreadsheet or transmitted to a database.

It can be difficult to build a web scraper if you don't know anything about coding. Luckily, there is data scraping software available for people with or without programming skills. Also, if you're a data scientist or a researcher, using a web scraper can make your data collection far more efficient.

Here is a list of the 30 most popular free web scraping tools. I put them together under the umbrella of software, although they range from open-source libraries and browser extensions to desktop software and more.

Best 30 Free Web Scraping Tools

1. Who is this for: Developers who are proficient enough at programming to build a web scraper or web crawler for crawling websites.

Why you should use it: Beautiful Soup is an open-source Python library designed for scraping HTML and XML files.

Web scraping using Python

What is web scraping?

For a data scientist, web scraping is yet another data extraction method, especially for data that is only available on a website. Here I am not talking about data presented in a tidy HTML table, which is comparatively easy to extract using the native reader functions in R or Python. A dedicated web scraper might be required if the data being extracted is primarily unstructured (text, images, videos, etc.) and the intended volume of data is significantly large.

A very common use case is extracting reviews from travel websites or getting user-posted content from social networking sites.
Before you even think about developing a scraping utility, check whether the data you need is already available from other public sources, or whether the website itself provides REST APIs through which the data can be extracted. In most cases, these APIs come with many restrictions and might require a paid subscription for extended usage.

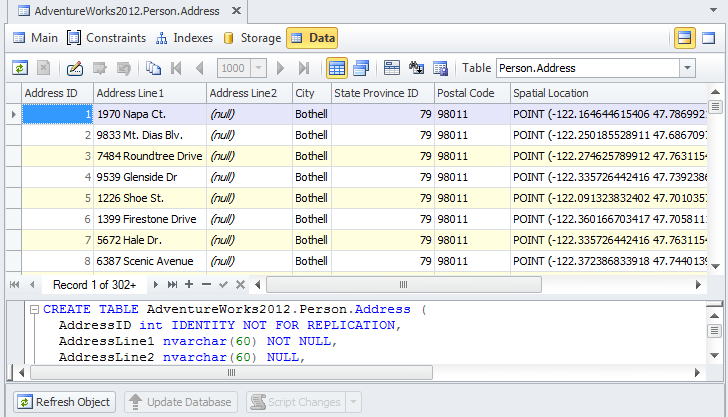

Approaches/tools for web scraping

a) Browser extension-based utilities - There are a couple of browser-based utilities like OutWit Hub for Firefox and Web Scraper for Chrome.

b) Third-party tools - Tools like FMiner, Octoparse, Dexi.io, etc.

c) BeautifulSoup package - This Python package comes with built-in functions for parsing an HTML page and extracting data by traversing the HTML tree using tags.

d) Scrapy - An open-source platform for scraping and developing advanced web crawlers.

When you require a sizable and repeatable extraction of data for your project, most of the ready-made tools usually run into roadblocks. With BeautifulSoup or Scrapy, we always have the flexibility to tune the utility according to our needs. Another advantage of writing your own tools is that you can incorporate a lot of data cleaning and pre-processing within the utility itself, so that the first cut of the extracted data is moderately clean. In the subsequent sections, we will discuss in detail how to use the BeautifulSoup package for scraping.

Beautiful Soup - Python-based approach

Beautiful Soup is a Python library for extracting data from HTML and XML files. The latest version available for download, Beautiful Soup 4 (BS4), works best with Python 3. BS4 can be installed like any other standard Python library using pip install beautifulsoup4. For HTML, BS4 can work with the standard HTML parser that ships with Python. It can also use other parser libraries such as lxml, which need to be installed separately.

How does it work? - Example

a) In this example we are using a tourism website. In the next steps, we will extract data from this webpage.

b) Here we will extract every place listed on the page, along with the description and the user reviews posted for each one.

c) The function get_html_to_soup accepts a generic URL as input and returns the Beautiful Soup object.
This object supports several getter methods which can return data based on specific HTML tags.
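
The original body of get_html_to_soup is not shown here, so the following is only a minimal sketch of how such a function might look, assuming the page is fetched with the standard library's urllib.request and parsed with Python's built-in html.parser. The helper names html_to_soup and extract_places, the User-Agent value, and the sample markup with its class names (place, description, reviews) are all hypothetical stand-ins; a real tourism page will have its own structure.

```python
from urllib.request import Request, urlopen

from bs4 import BeautifulSoup


def html_to_soup(html, parser="html.parser"):
    """Parse an HTML string (or bytes) into a BeautifulSoup object."""
    return BeautifulSoup(html, parser)


def get_html_to_soup(url, parser="html.parser", timeout=10):
    """Download the page at `url` and return it as a BeautifulSoup object."""
    # Assumption: some sites reject requests without a User-Agent header.
    request = Request(url, headers={"User-Agent": "Mozilla/5.0 (example scraper)"})
    with urlopen(request, timeout=timeout) as response:
        return html_to_soup(response.read(), parser)


# Hypothetical markup standing in for a downloaded page, so the
# example runs without network access.
SAMPLE_HTML = """
<html><body>
  <div class="place">
    <h2>Old Town</h2>
    <p class="description">Historic quarter with cobbled streets.</p>
    <ul class="reviews"><li>Lovely walk.</li><li>Crowded at noon.</li></ul>
  </div>
  <div class="place">
    <h2>Harbour</h2>
    <p class="description">Working port with a fish market.</p>
    <ul class="reviews"><li>Great seafood.</li></ul>
  </div>
</body></html>
"""


def extract_places(soup):
    """Collect name, description, and reviews for every place block."""
    places = []
    # find_all returns every matching tag; find returns only the first.
    for block in soup.find_all("div", class_="place"):
        review_list = block.find("ul", class_="reviews")
        places.append({
            "name": block.find("h2").get_text(strip=True),
            "description": block.find("p", class_="description").get_text(strip=True),
            "reviews": [li.get_text(strip=True) for li in review_list.find_all("li")],
        })
    return places


places = extract_places(html_to_soup(SAMPLE_HTML))
for place in places:
    print(place["name"], "-", len(place["reviews"]), "review(s)")
```

For a live page, the same extraction would be called as extract_places(get_html_to_soup(url)), with the tag names and classes adjusted to that page's actual markup.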
